Software Professionalism on the Force.com Platform

Last Thursday, July 10, 2014, I spoke at the Lehigh Valley Salesforce Developer User Group (@LVSFDCDUG) on “Clean Code and Software Professionalism on the Force.com Platform”. In this article, I would like to focus on the first half of my presentation on Software Professionalism. If you are interested, please review the slides I have posted to the meetup.

Are you a professional? That is a question everyone has to personally answer. However, I would argue that we as an industry must also ask ourselves that. Typically, professionals are thought of as dedicated, highly talented experts in their field who rarely make mistakes. For instance, a doctor is typically thought of as a professional. They handle their patient interactions with proper care and do their absolute best to never make any mistakes. That is crucial to their overall success.

Also, take into consideration a profession like an accountant. Accountants utilize a system called double entry bookkeeping. Essentially what this means is that for every entry an accountant needs to have an opposing entry for error protection. Now that is professional.

Developers, on the other hand, are typically laid back individuals who wear tech t-shirts to work and honestly don’t take ourselves very seriously. We like to have fun. We like nerdy things. To be honest, all of that is perfectly fine too. That doesn’t necessarily define professionalism. However, I want you to take a deep look into your past work and ask yourself one question: “Have I always done the absolute best work I could?” The answer is probably not. Sometimes deadlines take over or other life issues get in the way of some extra work you planned to do. In the past, you may have moved code along that you weren’t 100% sure about because it just had to go. However, that isn’t professional. We need to change that.

We need to be professionals. The fact is, the world really runs on code that people like you and me have written. Think about it for a second: whatever device you are using to read this article, an elevator you may ride to work every day, or even the brakes in your car that you expect to work when you press the pedal. We depend on software for every aspect of our lives.

How scary would it be to rely on software that may have been written at some point by some young 22-year-old kid, 14 hours into the work day, just pushing tasks along to keep up the pace? Is that really the type of dedication you want to see on a system like your car’s brakes? Of course not. Now, not every situation we run into is that serious, especially on the Salesforce platform. However, there can be massive consequences to what we do.

Knight Capital Group ran into a small computer glitch a few years ago. During a span of less than an hour, Knight Capital Group lost over $440 million. Unfortunately, because of this issue, the company lost a large majority of its assets and was eventually purchased by another company. Think about that for a second. A huge company, performing very well, and all of a sudden it loses almost everything, just from some computer glitch. Think about all of the workers at that company who potentially lost their jobs. Think about all of your own co-workers. They depend on their jobs to provide for their families. It is your responsibility to do your best to never let issues like that pop up. You must focus and never let anything slip past to ensure your own company doesn’t suffer a similar fate. With that said, this isn’t even the worst thing that can happen. What if the stakes were higher?

Back in the 1980s, there was a device called the Therac-25. The Therac-25 was a radiation therapy machine that had a few issues between 1985 and 1987. Unfortunately, those glitches resulted in several patients receiving many times the prescribed amount of radiation. Sadly, some of those patients died from the overdose and others were seriously injured. In this scenario, those patients put their lives in the hands of the developers who worked on that machine. They trusted it, but unfortunately it didn’t work.

What about an even bigger scenario? Back during the Cold War, both the United States and the Soviet Union experienced computer glitches that could have sparked World War III. In both scenarios, missile detection systems incorrectly reported that the nation was being attacked. Think about the catastrophic consequences that could have occurred just because of a mistake with a computer.

So, how do we combat this problem? Well, simply put, we need to be professional!

First, we must demand quality. Unparalleled quality to be specific. The truth is, we should never have a release or ship a product/project to a customer if it doesn’t match our high quality standards. We are the ultimate gatekeepers when it comes to handling quality. We are the ones responsible for maintaining and ensuring that quality.

I know what you may be thinking. This all sounds great in a perfect world where time isn’t a factor. Businesses sometimes make decisions to cut back on certain projects. Say, for instance, the project manager or your boss comes up to you and tells you “we need to get this out the door”. They tell you they want to cut time, “so let’s not write unit tests”. This is a scenario I have seen and experienced before. At the end of the day, ultimately it is not always in your control how much time you have to perform a task. However, as a professional, it is expected that you work with the business to explain why certain things are important. Unit tests for instance are something you simply can’t skimp on because of long term repercussions of doing so. However, if the business still decides to cut your time, you at least did your job warning them of the consequences of cutting back on the necessary time. At that point, there is nothing you can do but stay professional and give the best possible product within the constraints you were given.

As professionals, we are expected to be ready by having our code in a stable state to be able to react to business needs. In other words, we need to be agile! There are several different development methodologies out there, but I am a big advocate of Agile. The general idea behind it is that as you develop, you keep your code and your progress advancing through small iterative chunks. At the end of the day, this allows flexibility for the business. It has several other important benefits, but for now the focus is that it allows for quick reactions by the business.

For instance, say you are given a chunk of requirements that may take you years to complete. When you begin working on it, it is crucial to focus on the most important aspects of those requirements and get them working as soon as possible. At the end of every sprint, your code should be ready to go to production, even if the business doesn’t need it at the time. There may come a point, maybe 9 months down the line, where the business determines there may be better ROI on a different project. They don’t want to waste more time on this current effort, but there will be problems if you turn around and say it may take several months to get the code into a production-ready state. Your development methodology should have code being continually tested and continually stable at the end of every sprint. This means you shouldn’t work on a bunch of functionality and skimp on unit tests, planning to write all of them later in the project. On top of the lack of flexibility, that is just a bad practice in general.

While maintaining flexibility and quality, it is also important to maintain a certain level of productivity. Your progress should never slow down the longer you work on a project. There should never be a point where fewer features can be added due to complexity inside the application. Essentially, what this means is that your code should never resemble “spaghetti code”. If you don’t know what “spaghetti code” is, it is basically a chunk of code that is very difficult to read and/or modify because it consists of a bunch of different hacks to just get it working. On top of that, it almost never has any clear documentation or unit tests. That makes it extremely difficult to ensure the current functionality doesn’t break when you make a modification. So, how do you handle this?

Well, let’s start off with the easy one first. If you are starting on this code base from scratch, simply maintain the level of quality you expect and this should never be a problem. Now, for the harder part, if you inherit a code base that is jumbled together like this, the first thing you need to do is start adding unit tests. Use your unit tests to verify functionality and to allow you some flexibility when it comes time to refactor. As you progress, with more unit testing and more refactoring, your productivity should go up. The most important thing to do here is to make sure that whenever you touch a piece of code, that code is better off after you are done. As long as you do that, this situation should never come up.

As you continue to work, you should never have any excuses and you should never be paralyzed by fear. As I noted above, unit tests are so vital to this effort that their importance can’t be overstated. They will save you. By utilizing a TDD methodology, you should always be able to run your unit tests and verify your code works against the intended functionality. Now I understand TDD is basically impossible on the Force.com platform because of the limitations around the speed at which unit tests can compile and run. However, you can still do a modified version of TDD. The overall goal of TDD is excellent code coverage, not just line coverage. It is important that your tests are scenario based. This means that your tests should cover all possible scenarios of how that code will run. This is very different from the minimum Salesforce requires, because that 75% requirement only measures line coverage. You need to account for branch coverage as well as having very strong assert statements. This is what will allow you to refactor and modify the code with no fear, because you can just click a button and verify the functionality works whenever you want. Without that reassurance, this will be very difficult and eventually your code will fall into the inevitable category of “spaghetti code”.
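To make the gap between line coverage and scenario-based coverage concrete, here is a minimal sketch. It is written in Python rather than Apex purely so it can be run anywhere, and the `apply_discount` function and its business rule are hypothetical, invented for illustration. The first test alone executes every line of the function, so a line-coverage report would look perfect, yet it takes the other tests to pin down the cases where the discount must not apply.

```python
import unittest


def apply_discount(amount, is_partner):
    """Hypothetical rule: partners get 10% off orders of 1000 or more."""
    discount = 0
    if is_partner and amount >= 1000:
        discount = amount // 10
    return amount - discount


class ApplyDiscountScenarios(unittest.TestCase):
    # This single test runs every line of apply_discount, so line
    # coverage would report 100%. It says nothing about the cases
    # where the discount must NOT be applied.
    def test_partner_large_order_gets_discount(self):
        self.assertEqual(apply_discount(2000, True), 1800)

    # The remaining tests exercise the other branches and the boundary.
    def test_non_partner_pays_full_price(self):
        self.assertEqual(apply_discount(2000, False), 2000)

    def test_partner_small_order_pays_full_price(self):
        self.assertEqual(apply_discount(999, True), 999)

    def test_partner_order_at_threshold_gets_discount(self):
        self.assertEqual(apply_discount(1000, True), 900)
```

Saved to a file, this can be run with `python -m unittest`. The same shape carries over to an Apex test class: one test method per scenario, each ending in an assertion on the actual result rather than just calling the code.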

QA should fear you. This slide kind of speaks for itself. At the end of the day, if you are maintaining the highest level of quality, QA should wonder every day why they still have jobs. I am obviously not advocating at all that QA isn’t a vital role. What I am saying here is that the developer should shoulder the responsibility of providing QA the best experience possible. Sometimes, as I have seen, QA can be a security blanket for the developer, where the developer feels they can let substandard code through because QA will catch it and give them more time to work on it during “bug fixing time”. This is a terrible model and one that is not sustainable, specifically because it abuses the reason why QA exists. QA is not a means of pushing off work for another time.

I also want to point out that there will be times when a bug or issue gets through to QA. It is understandable, and nothing is error free. With that said, it should be a bit more of a traumatic experience than it sometimes is. When a bug gets discovered, you should wonder what went wrong and find the problem in your process so it doesn’t happen again. Continually tweak how you approach your work to try to achieve the perfect process. With that said, if you have QA returning lists consisting of 100+ bugs, you have massive problems and should rethink your whole strategy.

One of the most difficult things for a developer to do is provide estimates. I suspect the reason is how developers like to think. Developers work with computers. Computers only do exactly what they are told to do. Estimates, on the other hand, are anything but science. They are not specific and ultimately involve a bit of guesswork. There are ways to minimize that guesswork and I’d like to talk about that now.

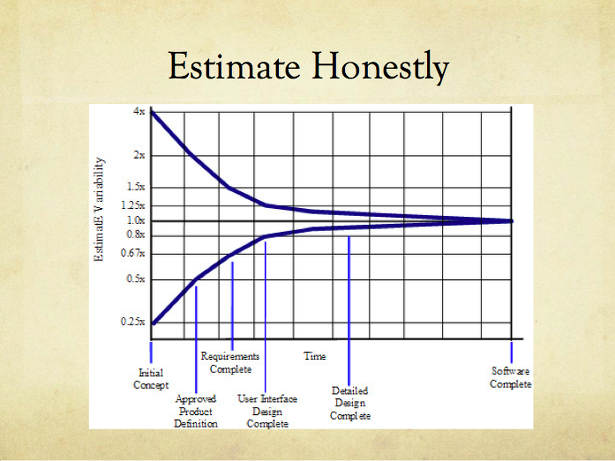

In the slide above, you will see a graph called the Cone of Uncertainty. The Cone of Uncertainty provides a means for giving an accurate range on a piece of work. The general idea behind it is that at any point during the process, you should be able to generate a range for how much time you will need to complete a task. It is very important to note that it is a range, not a single time. It is always a range until the project is complete. The fact of it is that there will always be some level of uncertainty while a project is in progress. This makes it impossible to give a specific time. So, if you estimate a task and tell your boss/project manager that it will take you 40 hours to complete it, you are essentially lying. There is no way you can know it will take exactly 40 hours. If you are past the User Interface Design, you should be able to give a range using factors of 0.8 and 1.2. So, with 40 hours as the estimate you came up with, you can reasonably say that the task will take between 32 hours and 48 hours. This is much more honest than the single 40 hour estimate.
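The arithmetic behind that range can be sketched in a few lines. This is only an illustration: the function name `estimate_range` is made up here, and the 0.8 and 1.2 factors are the ones quoted above for work that is past user interface design.

```python
def estimate_range(point_estimate_hours, low_factor, high_factor):
    """Turn a single-point estimate into a (low, high) range in hours."""
    if not 0 < low_factor <= 1 <= high_factor:
        raise ValueError("expected 0 < low_factor <= 1 <= high_factor")
    return (point_estimate_hours * low_factor,
            point_estimate_hours * high_factor)


# The 40-hour task from the text, estimated after user interface design.
low, high = estimate_range(40, 0.8, 1.2)
print(f"Between {low:.0f} and {high:.0f} hours")
# prints "Between 32 and 48 hours"
```

Keeping the factors as parameters makes it obvious that the same point estimate yields a wider or narrower range depending on how far along the project is.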

In this scenario, there isn’t necessarily a groundbreaking difference between the range and the estimate of 40 hours. Most likely, you would have only been off by 8 hours in the worst case scenario. That isn’t catastrophic. The true power of this is much earlier in the process. The business almost always wants a more accurate estimate than what is possible very early in the process. It is your job to still give an estimate. Utilizing the Cone of Uncertainty, you can give the business an estimate while not giving out inaccurate information. You may be tasked with estimating a project that might be huge. Imagine a project that has complex requirements but very little detail. You look at the requirements and figure it will take about 3,120 hours (6 months for 3 developers at 40 hours a week). With that said, if you give that number to the business, they won’t have an accurate view of the true level of effort. Really, because you are so early in the process, the amount of time it may take would range from 780 hours (6.5 weeks for 3 developers) to 12,480 hours (2 years for 3 developers), the result of being off by a factor of 0.25 to 4. By giving this range, it is very clear to the business that more requirement gathering is necessary to bring the estimate into a more accurate range.
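The hours-to-calendar conversion in that example is easy to check. The sketch below uses only the numbers from the paragraph above; the helper name `calendar_weeks` is hypothetical, and it makes the same simplifying assumption as the text, namely that the work divides evenly across the three developers.

```python
HOURS_PER_WEEK = 40  # per developer, as in the text


def calendar_weeks(total_hours, developers):
    """Convert total effort in hours to calendar weeks for a team.

    Assumes the work splits evenly across developers, which is a
    simplification.
    """
    return total_hours / (developers * HOURS_PER_WEEK)


point_estimate = 3120              # hours, the initial guess from the text
low_factor, high_factor = 0.25, 4  # early-project factors from the text

for label, hours in [
    ("low", point_estimate * low_factor),
    ("point", point_estimate),
    ("high", point_estimate * high_factor),
]:
    print(f"{label:>5}: {hours:>7,.0f} hours = "
          f"{calendar_weeks(hours, 3):.1f} weeks for 3 developers")
```

The three rows work out to 6.5, 26, and 104 weeks, which matches the 6.5 weeks, 6 months, and 2 years quoted above.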

With estimation, there will be some push back from time to time. There will come a time where someone is going to come to you and say “can you lower these estimates a bit?” This is actually a very reasonable thing to say. Remember, it is the project manager’s/product owner’s job to get the most productivity possible out of the development team. They should be pushing you for the best level of productivity possible. With that said, as a professional, it is your job to turn to them and say “no!” The truth of it is, if you say “yes”, you were lying about the original estimate. As a professional, you should never pad your estimates.

The real challenge is how you handle the follow-up question. It is very typical for the project manager/product owner to turn around and say “well, can you try?” Your only option here is to answer truthfully, “No!” If you say you will try, what you are really saying is “go away!” However, what they hear is “yes, I will have it done quicker.” This is a big problem and a breakdown in communication.

Remember, sometimes a professional has to give information people don’t want to hear. Developers typically aren’t the most social people, but having good social and communication skills will be crucial to advancing your career. Work on being able to communicate with confidence so you can give the information you need to give to the right people when the time comes. They will respect you for it and have more trust in you. Ultimately, they realize you are the expert. When you give your opinion, they will listen. If they don’t, it says more about them than it does about you. Maintain your confidence and help guide the business with your expertise, even if you have to tell them something they don’t want to hear.

Technology is constantly advancing. Our industry demands we constantly learn, especially considering Salesforce ships three releases a year. I personally try to follow a 40/20 rule. 40 hours a week I dedicate to my job and my employer and 20 hours a week I dedicate to my career and myself. Anything that my employer wants me to do I will do to the best of my ability. If I go in tomorrow and find out that I now need to develop in Fortran, I will do just that and I will do it to the best of my ability. However, I will also go home and spend 20 hours a week learning something much more progressive, such as Apex or Visualforce.

Maintaining this aspect of professionalism is crucial to my long term success as a developer. In this industry, if you aren’t actively learning, you are falling behind. Advancements in our field are happening at a staggering rate. It is impossible to keep up with everything, but you should be actively reading and working with different languages to expand your skill set. At some point, the industry will shift. Don’t find yourself stuck in the past and out of work.

I would like to give credit to the above book, The Clean Coder: A Code of Conduct for Professional Programmers by Robert Martin (@unclebobmartin) for heavily influencing my presentation and this article. Robert Martin, aka Uncle Bob, has been a huge positive influence on my career. This is an amazing book and I highly suggest it for anyone who is interested.

Next week, I’ll review the second half of my presentation on Clean Code. I’ll dive into the code examples and explain key factors to consider when developing on the Force.com platform.

I hope you enjoyed the read. However you decide to do it, remember it is your responsibility to everyone to be a professional. Thank you!

Important Note: It is important to remember that these thoughts are my personal opinion. As with any opinion, it may or may not reflect the opinion of any organization I am associated with.